Whether you are deeply involved in AI development, interested in AI governance, or looking to understand the impact of AI on our society, the ‘Unpacking the EU AI Act’ event will provide you with valuable insights and information.

The AI for Good Global Summit is the leading action-oriented United Nations platform promoting AI to advance health, climate, gender, inclusive prosperity, sustainable infrastructure, and other global development priorities. Professor Ebrahimi, who is affiliated with C4DT, is a speaker at this event!

The WSIS+20 Forum High-Level Event will mark twenty years of progress in implementing the outcomes of the World Summit on the Information Society (WSIS), which took place in two phases: Geneva in 2003 and Tunis in 2005. Twenty years ago, WSIS set the framework for global digital cooperation, with a vision to build people-centric, inclusive, and development-oriented information and knowledge societies.

Intersection between foundation models and reinforcement learning

[Lang: Fr] [letemps.ch] Censored in India, battling the Brazilian judicial system, and at the heart of the US presidential campaign, Elon Musk’s social network is closely tied to democratic debate. Two experts analyze its influence.

Inspired by the panel session on “Transparency in Artificial Intelligence” at this year’s “AI House”, this write-up very informally summarizes Imad Aad’s thoughts about transparency and trust in AI. It is aimed at readers of all backgrounds, including those who have had little or no exposure to AI so far.

A new EPFL study has demonstrated the persuasive power of Large Language Models, finding that participants debating GPT-4 with access to their personal information were far more likely to change their opinion compared to those who debated humans.

This project underscores the need for a paradigm shift in data privacy policies, acknowledging the inherent trade-off between data utility and privacy that current Privacy Enhancing Technologies (PETs) cannot fully mitigate. It highlights the limitations of PETs and the systemic responsibility issues within the data supply chain, where technology producers often evade accountability. Consequently, it recommends a shift towards an evaluation framework centered on data use cases, one that prioritizes utility while minimizing leakage through nuanced risk assessments. Finally, the project calls for greater transparency and a redefined accountability structure in the data-sharing ecosystem.

David Atienza, head of the Embedded Systems Lab in the School of Engineering, has been named Editor-in-Chief of the journal Computing Surveys (CSUR) of the Association for Computing Machinery (ACM).

The Trust & Finance Forum takes place at the Geneva Centre for Security Policy (GCSP) in Geneva on May 1st, 2024, and will delve into the intersections of trust and finance. We’ll discuss digital trust trends and explore new opportunities through real success stories in finance.

Our new C4DT Digital Governance Book Review is out! This time: Anu Bradford (2023), “Digital Empires: The Global Battle to Regulate Technology.” In her new book, Bradford offers an in-depth, objective analysis of the three dominant regulatory models for digitalization (market-driven by the US, state-driven by China, and citizen-driven by the EU) and their global impacts on (…)

This book offers an in-depth, objective analysis of the three dominant regulatory models for digitalization—market-driven by the US, state-driven by China, and citizen-driven by the EU—and their global impacts on data, digital platforms, and the internet, highlighting the current geo-political struggles and possible future scenarios.

The slowdown in Moore’s Law has pushed high-end GPUs towards narrow number formats to improve logic density. This introduces new challenges for accurate Deep Neural Network (DNN) training and inference. Our research aims to bring novel solutions to the challenges introduced by ubiquitous, ever-growing DNN models and datasets. Our proposal targets building DNN platforms that are optimal in performance per watt across a broad class of workloads and that improve utility by unifying the infrastructure for both model training and inference.
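To make the accuracy challenge of narrow formats concrete, here is a minimal sketch (an illustration we added, not the project’s method) that emulates a 16-bit floating-point (IEEE 754 half precision, FP16) accumulator using only Python’s standard `struct` module, whose `"e"` format packs a value to half precision. The helper names `to_fp16` and `accumulate`, the increment of 1e-4, and the step count are hypothetical choices for illustration. The example sums a small value 10,000 times: once the FP16 running sum grows large enough that the increment is less than half the gap between neighbouring representable values, each addition rounds back to the same number and the sum stops growing.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value.

    struct's "e" format packs to binary16 (round to nearest) and we unpack it back.
    """
    return struct.unpack("e", struct.pack("e", x))[0]


def accumulate(increment: float, steps: int, fp16: bool) -> float:
    """Sum `increment` `steps` times, optionally rounding every step to FP16."""
    total = 0.0
    step = to_fp16(increment) if fp16 else increment
    for _ in range(steps):
        total = total + step
        if fp16:
            # Emulate an FP16 accumulator: the running sum is stored in 16 bits.
            total = to_fp16(total)
    return total


if __name__ == "__main__":
    fp64_sum = accumulate(1e-4, 10_000, fp16=False)
    fp16_sum = accumulate(1e-4, 10_000, fp16=True)
    print(f"float64 accumulator: {fp64_sum:.6f}")
    print(f"float16 accumulator: {fp16_sum:.6f}")
```

With these assumed parameters, the float64 sum is about 1.0, while the FP16 sum stalls at 0.25, where the spacing between representable half-precision values (2^-12) exceeds twice the increment. A widely used general mitigation is to keep accumulators in a wider format (for example FP32) while storing operands in the narrow format; whether the project adopts this approach is not stated here.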

The C4DT Factory team selected some Privacy Enhancing Technology (PET) links for you. They all relate to digital trust; whether it’s security, privacy, or trust in general, we have you covered!

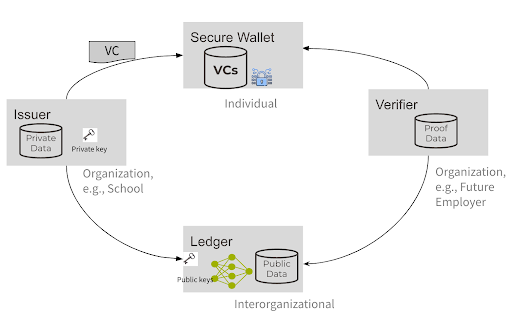

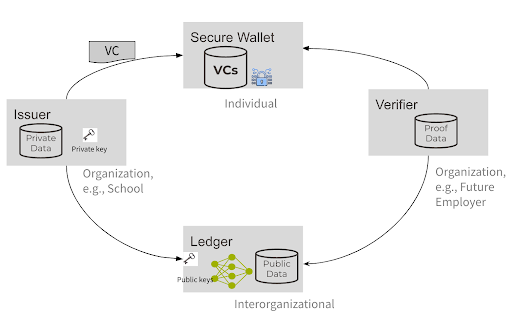

Switzerland’s E-ID journey so far: In 2021, the Swiss E-ID law proposal was rejected in a public referendum. The refusal was due to privacy concerns about the implementation and management of the system. In a nutshell, voters frowned upon the idea that a private entity would control users’ data. (…)

[Lang: Fr] [letemps.ch] An interview with Max Schrems for Le Temps, on the occasion of his visit to EPFL.

May/June [tbc], 2024, 10h00-12h00, online. Introduction: The adoption of multicloud architectures is a strategic response to evolving organizational needs in today’s digital landscape. Motivations for this transition include mitigating risks and ensuring disaster recovery and business continuity. Diversifying across multiple cloud providers enables organizations to weather potential service outages by seamlessly shifting services to maintain business (…)

The Open-Source AI Models track draws attention to the pivotal role that open-source AI models play in the responsible development of artificial intelligence and highlights the challenges that this field faces, including ethical and responsible usage of AI models, sustainability, and licensing and legal issues.

The AI Safety track addresses the pressing need for responsible AI usage beyond sensationalized risks. While global leaders address extreme threats, the track spotlights often-overlooked but crucial challenges, such as bias mitigation, individuals’ privacy protection, generation of inaccurate or fabricated information, and AI alignment with human values. It serves as a platform for experts from diverse fields to share insights, tackle challenges, and suggest solutions for a safer, human-centric, and trustworthy AI future.

By Melanie Kolbe-Guyot, Head of Policy, C4DT. It is no secret that we are in the midst of an intense technological rivalry among the great powers of the United States, China, and the European Union, a rivalry that encompasses economic, security, and geopolitical dimensions. The development, control, and weaponization of digital technologies have become (…)

PeaceTech is the use of science and technology to prevent, reduce, or resolve violence and conflict, and to promote peace, while emphasizing the need to avoid dual use of technology, where technologies can be used for harmful ends rather than for peaceful purposes. It encompasses the research, development, and application of STEM (Science, Technology, Engineering, Math) to support strategies against violence.

Violence is considered not only in terms of war and armed conflict, but also as violence striking civil society by any means: hate speech, cyberbullying, online mis- and disinformation, gender-based violence, and violence against minority groups.