A noteworthy development in the field of innovation sees EPFL, HEIG-VD and UNIL joining forces at the highest level, with the support of the Canton of Vaud, to unveil the outline of the [seal] Program. This initiative aims to stimulate collaboration between the three universities and accelerate the transfer of knowledge and technologies to the socio-economic fabric in the field of digital trust and cybersecurity. The launch of the Program includes a first call for projects dealing with cybercrime. Just as innovation drives our economy, digitalization drives innovation, and trust drives digitalization. In this context of the rapid digitization of our society, the notion of trust and security is at the heart of all concerns.

The Global Encryption Forum will be held at the unlimitrust campus in Lausanne on 19 October. Industry leaders, experts, and professionals (including EPFL Professors Jean-Pierre Hubaux and Serge Vaudenay) will come together to delve into the intricate web of encryption’s economic implications in our interconnected world.

By Imad Aad, Technical Project Manager, C4DT. This blog post has been written as part of the Geneva Dialogue on Responsible Behavior in Cyberspace. Everyone can develop software, and the resulting quality can vary considerably. There is no single ‘right way’ to write code and reach a given goal. Numerous technologies exist with increasing (…)

The challenges faced by humanitarian organizations in general and by the International Committee of the Red Cross (ICRC) in particular are immense. Therefore, EPFL and ETH Zurich are joining forces with the ICRC through the Engineering for Humanitarian Action initiative to explore innovative solutions to such crises.

Do you ever code in modern JavaScript? Then, if you have multiple projects, you are probably happy that nvm exists. Or maybe you’re more of a Python person? Then you must know about pyenv. Java? jenv or sdkman! Thing is, you often need to have multiple versions of a tool or binary installed when you (…)

The Trust Valley is pleased to invite you to its annual event, held online on October 4th.

An alliance for excellence supported by multiple public, private and academic actors, the “TRUST VALLEY” was launched on Thursday, October 8, 2020. Cantons, the Confederation, academic institutions and captains of industry such as ELCA, Kudelski and SICPA come together to co-construct this pool of expertise and encourage the emergence of innovative projects.

Building on the expertise of 300 companies and 500 experts, the Swiss cantons of Vaud and Geneva are launching the Trust Valley, a public-private cooperation for safe digital transformation, cybersecurity and innovation. Among the founding partners are C4DT members ELCA, Kudelski Group and SICPA.

The 3rd edition of the Crypto Valley Conference will be held on June 11-12 in Rotkreuz, Switzerland, with two days of in-depth discussions on the current state and future of blockchain technology.

More information will follow shortly.

The Crypto Valley is now connecting Zug with Romandie. The Valley is Switzerland in its entirety, and beyond. To celebrate this major step, the Crypto Valley Association is officially launching its Western Switzerland Chapter on December 2 at EPFL, Lausanne. Join them to meet the dynamic local blockchain and crypto community. The event will focus in particular on crypto finance, digital assets and blockchain education.

December 2nd, 2019 @ 17:00-19:00, Polydôme EPFL

By Prof. Wei Meng, Chinese University of Hong Kong

Clicking is the primary way that users interact with web applications. Attackers aim to intercept genuine user clicks to either send malicious commands to another application on behalf of the user or fabricate realistic ad click traffic. In this talk, Prof. Wei Meng investigates click interception practices on the Web.

Tuesday July 23rd, 2019 @10:00, room BC 420

Citizens have a right to an explanation of the decisions affecting them. However, if AIs are to be used in decision procedures, how can we explain complex AI systems to the general public, while so many computer scientists find them inscrutably opaque? In this presentation, an optimistic approach to this seemingly hopeless question will be presented.

Inspired by this year’s “AI House” panel session on “Transparency in Artificial Intelligence”, this write-up very informally summarizes Imad Aad’s thoughts about transparency and trust in AI. It is aimed at readers of all backgrounds, including those who have had little or no exposure to AI so far.

This project underscores the need for a paradigm shift in data privacy policies, acknowledging the inherent trade-off between data utility and privacy that current Privacy Enhancing Technologies (PETs) cannot fully mitigate. It highlights the limitations of PETs and the systemic responsibility issues within the data supply chain, where technology producers often evade accountability. Consequently, a shift towards a data-use case-centric evaluation framework is recommended, one that prioritizes utility while minimizing leakage through nuanced risk assessments. Finally, the project calls for greater transparency and a redefined accountability structure in the data sharing ecosystem.

This book offers an in-depth, objective analysis of the three dominant regulatory models for digitalization—market-driven by the US, state-driven by China, and citizen-driven by the EU—and their global impacts on data, digital platforms, and the internet, highlighting the current geo-political struggles and possible future scenarios.

The slowdown in Moore’s Law has pushed high-end GPUs towards narrow number formats to improve logic density. This introduces new challenges for accurate Deep Neural Network (DNN) training and inference. Our research aims to bring novel solutions to the challenges introduced by ubiquitous ever-growing DNN models and datasets. Our proposal targets building DNN platforms that are optimal in performance/Watt across a broad class of workloads and improve utility by unifying the infrastructure for both training models and inference tasks.

The C4DT Factory team selected some Privacy Enhancing Technology (PET) links for you. They are all related to digital trust. Security, privacy, trust in general: we have you covered!

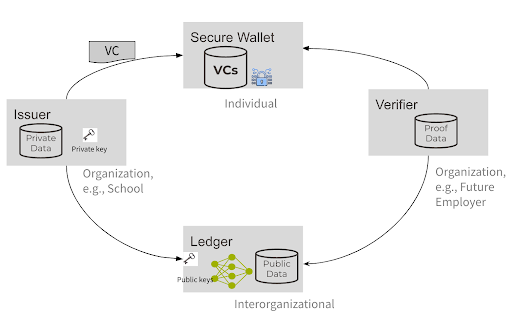

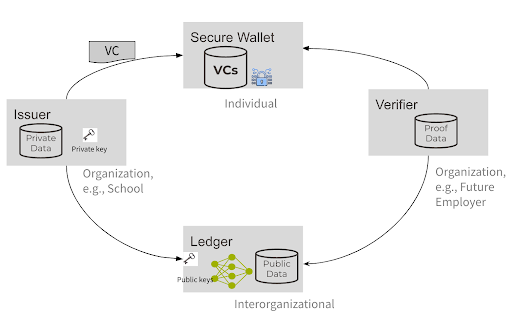

Switzerland’s E-ID journey so far: In 2021, the proposed Swiss E-ID law was rejected in a public referendum. The refusal was due to privacy concerns in the implementation and management of that system. In a nutshell, the idea that a private entity would be in control of users’ data was frowned upon. (…)

May/June [tbc], 2024, 10h00-12h00, online. Introduction: The adoption of multicloud architectures is a strategic response to evolving organizational needs in today’s digital landscape. Motivations for this transition include mitigating risks, such as disaster recovery and business continuity. Diversifying across multiple cloud providers enables organizations to weather potential service outages by seamlessly shifting services to maintain business (…)

The Open-Source AI Models track draws attention to the pivotal role that open-source AI models play in the responsible development of artificial intelligence and highlights the challenges that this field faces, including ethical and responsible usage of AI models, sustainability, and licensing and legal issues.

The AI Safety track addresses the pressing need for responsible AI usage beyond sensationalized risks. While global leaders address extreme threats, the track spotlights often-overlooked but crucial challenges, such as bias mitigation, individuals’ privacy protection, generation of inaccurate or fabricated information, and AI alignment with human values. It serves as a platform for experts from diverse fields to share insights, tackle challenges, and suggest solutions for a safer, human-centric, and trustworthy AI future.